OData is a widely accepted open standard for data access over the Internet. Skyvia allows you to easily expose your Amazon Redshift data via an OData RESTful API for data access and manipulation.

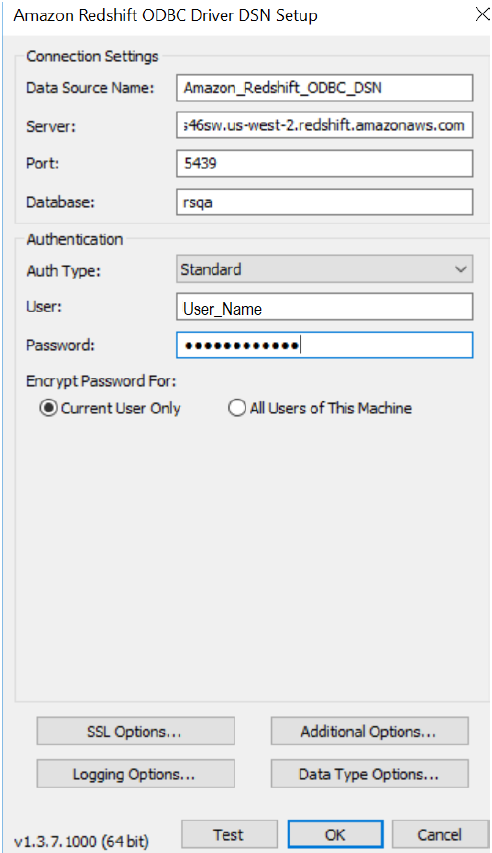

Configuring Excel for Redshift Data Warehouse

Microsoft Excel features calculations, graphing tools, pivot tables, and a macro programming language that allows users to work with data in many of the ways that suit their needs. A JDBC driver is a software component enabling a Java application to interact with a database. iDashboards is able to connect to an Amazon Redshift data warehouse by using a proprietary JDBC driver.

Project 3: Data Warehouse with Amazon Redshift

A music streaming startup, Sparkify, has grown its user base and song database and wants to move its processes and data onto the cloud. Its data resides in S3, in a directory of JSON logs on user activity on the app, as well as a directory with JSON metadata on the songs in the app.

This blog is intended to give an overview of the considerations you’ll want to make as you build your Redshift data warehouse to ensure you are getting the optimal performance. With seven years of experience managing a Redshift fleet of 335 clusters, combining for 2000+ nodes, we (your co-authors Neha, Senior Customer Solutions Engineer, and Chris, Analytics Manager, here at Sisense) have had the benefit of hours of monitoring their performance and building a deep understanding of how best to manage a Redshift cluster. So let’s dive in!

OLTP vs OLAP

First, we’ll dive into the two types of databases: OLAP (Online Analytical Processing) and OLTP (Online Transaction Processing). In Chapter 2 of our Data Strategy guide, we review the difference between analytic and transactional databases. These insights can help identify the right technology for your data analytics use case.

Redshift is a type of OLAP database. An OLAP database is best for situations where you read from the database more often than you write to it. OLAP databases excel at queries that require large table scans (e.g. roll-ups of many rows of data).

On the other hand, OLTP databases are great for cases where your data is written to the database as often as it is being read from it. As the name suggests, a common use case for this is any transactional data.

Redshift, like BigQuery and Snowflake, is a cloud-based distributed massively parallel processing (MPP) database, built for big data sets and complex analytical workflows. Fundamentally, these databases are different from the transactional databases we’ve seen in the past, and before we jump into how to build your data warehouse, it’s important to understand that distinction.
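To make the OLTP versus OLAP distinction concrete, here is a minimal sketch of the two query shapes. It uses Python's built-in `sqlite3` module purely as a stand-in so the example runs anywhere; SQLite is not an OLAP engine, and the `orders` table and its rows are made up for illustration.

```python
import sqlite3

# In-memory database used only to illustrate the two query shapes.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
)
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "acme", 120.0), (2, "acme", 80.0), (3, "globex", 50.0)],
)

# OLTP-style access: write and read a single row, located by its key.
conn.execute("UPDATE orders SET amount = 90.0 WHERE order_id = 2")
single_row = conn.execute(
    "SELECT amount FROM orders WHERE order_id = 2"
).fetchone()

# OLAP-style access: a roll-up that scans every row and aggregates.
rollup = conn.execute(
    "SELECT customer, SUM(amount), COUNT(*) "
    "FROM orders GROUP BY customer ORDER BY customer"
).fetchall()

print(single_row)
print(rollup)
```

The first pattern touches one row at a time and is what transactional systems are tuned for. The second scans the whole table, which is the kind of query an MPP warehouse such as Redshift is designed to spread across nodes.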

Choosing a Cluster

As your warehouse will be the central point from which you deliver data to your business, it is important to choose the storage and performance levels that will match the needs of your business. We will review these below.

Selecting the Right Nodes

The first step in setting up your Redshift cluster is selecting which type of nodes you’ll want to use. This selection will be the biggest driver for the performance of your warehouse, so you’ll want to consider the end user’s needs when making this decision.

Redshift offers four options for node types that are split into two categories: dense compute and dense storage.

The number of nodes you have will control how much storage space you have available in your cluster, and as such your data volume will drive this decision. You’ll want to consider your total data volume as it will be stored on the cluster, and add an additional 30-40% of space. This overhead is consumed by ETL jobs rebuilding tables, complex queries that write temp tables to disk during execution, copying uncompressed data, and spikes in source data volume.

Across the Sisense Redshift fleet, we’ve found that at about 80% disk consumption, you begin to see a degradation in query performance. Depending on the purpose of the Redshift cluster, degradation in performance can be extremely painful. For example, if you are using Redshift solely for analytics purposes, you can scale the cluster up with more nodes when this happens and resume work once it is complete. If the cluster instead serves consumer-facing workloads (e.g. dashboards), degraded performance can leave your consumers frustrated with their experience. With that in mind, consider your use case when planning for cluster sizes.

Cluster Performance Configurations

Short Query Acceleration

Long queries can hold up analytics by preventing shorter, faster queries from returning as they get queued up behind the long-running queries.

WLM

One of the main settings to configure is your WLM (workload management). WLM can be thought of as “how many processes can be managed by a cluster at one time.” It allows for control over how much of the cluster’s compute resources can be allocated to a query or set of queries being run at a given time.

Using a WLM allows for control over query concurrency as well. For your reporting users, it may be fine to support higher concurrency, but for transformation workloads, it may be best to only have a single transformation running at a given time. Finding the WLM configuration that works best for your use case may require some tinkering; many land in the 6-12 range.
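As a rough illustration of the reporting versus transformation split described above, the sketch below builds a manual WLM queue definition in the JSON shape accepted by Redshift's `wlm_json_configuration` parameter. The keys `user_group`, `query_concurrency`, and `memory_percent_to_use` come from that parameter; the group names and every number are assumptions chosen for the example, not recommendations.

```python
import json

# Hypothetical user groups and illustrative numbers; tune these for your workload.
wlm_queues = [
    {   # Reporting / BI users: many small queries, so allow more concurrency.
        "user_group": ["reporting_users"],
        "query_concurrency": 8,
        "memory_percent_to_use": 50,
    },
    {   # Transformation (ETL) jobs: one at a time, with a capped memory share.
        "user_group": ["etl_users"],
        "query_concurrency": 1,
        "memory_percent_to_use": 30,
    },
    {   # Default queue for everything else.
        "query_concurrency": 3,
        "memory_percent_to_use": 20,
    },
]


def validate_wlm(queues):
    """Run basic sanity checks and return the total number of query slots."""
    total_memory = sum(q["memory_percent_to_use"] for q in queues)
    if total_memory > 100:
        raise ValueError(f"memory allocations sum to {total_memory}%, over 100%")
    if any(q["query_concurrency"] < 1 for q in queues):
        raise ValueError("every queue needs at least one concurrency slot")
    return sum(q["query_concurrency"] for q in queues)


total_slots = validate_wlm(wlm_queues)
wlm_json = json.dumps(wlm_queues)  # string to supply as the parameter value
print(total_slots)
print(wlm_json)
```

Keeping the definition in code makes it easy to review changes to slot counts and memory shares before they are applied to a cluster parameter group.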
Without limits, a resource-heavy workload such as ETL can have an impact on your other workflows like ad-hoc analysis or BI reporting. Using WLM, you can assign queries from the ETL user or a user group to a specific queue that can only consume a limited percentage of the cluster’s available compute resources. In this way, you are able to cap a specific workflow’s ability to hog compute resources. WLM also allows for controlling the number of queries being run for a specific user or user group.

Concurrency Scaling

We are now seeing data warehouses (DWs) process thousands of queries per day and serve hundreds of users’ BI and reporting needs. Typically, when a Redshift cluster is upsized, both the compute and storage resources are simultaneously upgraded. But a business could very well have a scenario where it needs more compute power and higher concurrency to process a backlog of queries, but not necessarily more storage. Redshift, like many OLAP databases, wasn’t initially built for this purpose, but AWS has since built concurrency scaling to address this specific problem.

If you have a case where you don’t need more storage and have peaks of usage that would require more computational resources/concurrency, Redshift’s concurrency scaling would be a good option to reduce the time spent waiting for queries to even begin running. Whenever there are more queries queued up than can be managed by WLM at a given moment, Redshift assesses whether it would be worth the overhead to spin up additional clusters to go through the queued up queries. While each instance may see different results, we have seen up to a 90%+ reduction in queue time when enabling concurrency scaling.

Currently, Redshift gives the ability to spin up to 10 additional clusters (giving 11X the resources in total) with concurrency scaling. Users can track how often clusters are spinning up additional clusters due to concurrency scaling. Concurrency scaling can be used in conjunction with WLM to find the concurrency/performance balance that works best for you.
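The capacity figures quoted in this post (30-40% storage headroom, the roughly 80% disk threshold, and up to 10 concurrency scaling clusters) can be folded into a small planning helper. This is a back-of-the-envelope sketch: the function names, the 35% default, and the 2 TB per-node capacity in the usage lines are assumptions for illustration, not values taken from Redshift.

```python
import math


def required_storage_tb(data_tb, headroom=0.35):
    """Data volume plus working-space headroom (the post suggests 30-40%)."""
    return data_tb * (1 + headroom)


def nodes_needed(data_tb, node_capacity_tb, headroom=0.35):
    """Smallest node count whose combined storage covers data plus headroom."""
    return math.ceil(required_storage_tb(data_tb, headroom) / node_capacity_tb)


def disk_is_at_risk(used_tb, total_tb, threshold=0.80):
    """True once disk usage reaches the level where queries tend to slow down."""
    return used_tb / total_tb >= threshold


def peak_capacity_multiplier(extra_clusters, max_extra=10):
    """Main cluster plus concurrency scaling clusters, capped at max_extra."""
    return 1 + min(extra_clusters, max_extra)


# Example: 10 TB of data on hypothetical nodes holding 2 TB each.
print(nodes_needed(10, 2))
print(disk_is_at_risk(8.5, 10))
print(peak_capacity_multiplier(10))
```

The helper only estimates storage-driven node counts. Node type, query complexity, and concurrency needs still have to be weighed separately, as discussed above.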
Modeling Your Data for Performance

Data architecture

The data landscape has changed significantly over the last two decades. It is no longer only a few analysts running queries against a database for one-off analytical queries.


Author: Kimberly
